This is the best summary I could come up with:

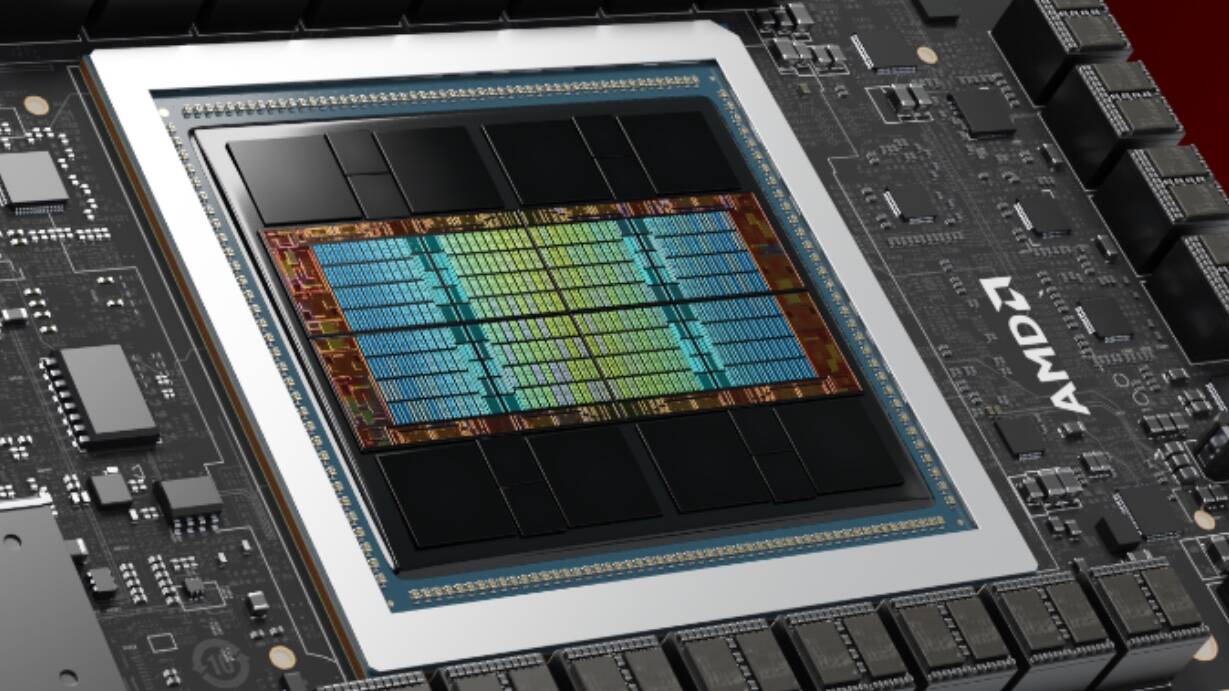

The Instinct MI325X, at least from what we can tell, is a lot like Nvidia’s H200 in that it’s an HBM3e-enhanced version of the GPU we detailed at length during AMD’s Advancing AI event in December 2023.

Despite hardware support for FP8 being a major selling point of the MI300X when it launched, AMD has generally focused on half-precision performance in its benchmarks.

For a lot of its benchmarks, AMD is relying on vLLM – an inference library which hasn’t had solid support for FP8 data types.

But if your main concern is getting a model to run on as few GPUs as possible and you can not only drop to lower precision but double your floating point throughput, it’s hard to see why you wouldn’t.
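
To put rough numbers on that trade-off, here is a back-of-the-envelope sketch. It assumes the 288GB per-GPU figure quoted in this summary, a hypothetical 405-billion-parameter model (not something the article specifies), and it counts weights only, ignoring KV cache, activations, and runtime overhead, so real deployments would need more headroom.

```python
import math

# Per-GPU HBM3e capacity as quoted in this summary for MI325X (assumption: taken at face value).
GPU_MEMORY_GB = 288

# Storage cost per parameter for each precision.
BYTES_PER_PARAM = {"fp16": 2, "fp8": 1}


def gpus_needed(params_billions: float, dtype: str,
                gpu_memory_gb: float = GPU_MEMORY_GB) -> int:
    """Minimum GPU count to hold the weights alone.

    Ignores KV cache, activations, and framework overhead,
    so treat the result as a lower bound.
    """
    # 1e9 params * bytes per param / 1e9 bytes per GB = GB of weights
    weight_gb = params_billions * BYTES_PER_PARAM[dtype]
    return math.ceil(weight_gb / gpu_memory_gb)


# Hypothetical 405B-parameter model, chosen only for illustration.
for dtype in ("fp16", "fp8"):
    print(dtype, gpus_needed(405, dtype))
```

Under those assumptions the weights need three GPUs at FP16 and two at FP8, which is the "fewer GPUs" half of the argument; the throughput half depends on the software stack actually supporting FP8.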

AMD isn’t oblivious to the fact that Nvidia’s Blackwell parts hold the advantage, and to better compete the House of Zen is moving to a yearly release cadence for new Instinct accelerators.

According to AMD, CDNA 4 will stick to the same 288GB of HBM3e config as MI325X, but move to a 3nm process node for the compute tiles, and add support for FP4 and FP6 data types – the latter of which Nvidia is already adopting with Blackwell.

The original article contains 1,113 words, the summary contains 204 words. Saved 82%. I’m a bot and I’m open source!