Sigh, not this article again. No, they can’t “deepfake a person with one photo”. They can create a bad uncanny-valley 75% accurate version of one.

a bad uncanny-valley 75% accurate version of one

Actually a perfect description of what a deepfake is.

The actual research page is so awkward. The TLDR at the top goes:

single portrait photo + speech audio = hyper-realistic talking face video

Then a little lower comes the big red warning:

We are exploring visual affective skill generation for virtual, interactive characters, NOT impersonating any person in the real world.

No siree! Big “not what it looks like” vibes.

Why would you develop this technology? I simply don’t understand. All involved should be sent to jail. What the fuck.

They worded the headline that way to scare you into that reaction. They’re only interested in telling you about the negative uses because that drives engagement.

I understand AI evangelists - which you may or may not be idk - look down on us Luddites who have the gall to ask questions, but you seriously can’t see any potential issue with this technology without some sort of restrictions in place?

You can’t see why people are a little hesitant in an era where massive international corporations are endlessly scraping anything and everything on the Internet to dump into LLMs et al. to use against us to make an extra dollar?

You can’t see why people are worried about governments and otherwise bad actors having access to this technology at scale?

I don’t think these people should be locked up or all AI usage banned. But there is definitely a middle ground between absolute prohibition and no restrictions at all.

This is unnecessarily aggressive, I don’t need this today.

And your comment was unnecessarily patronizing IMO. Do you think they needed that today?

If you don’t want people to respond to your takes then don’t post them in public forums. I am critiquing your stance. If it’s overly aggressive then I apologize for the tone.

I saw what you wrote before your edits. I’m not going to engage with people who talk like that. Good day.

I can’t control that you saw my comments seconds after they were posted but before the 20-30s it takes for me to edit them. Nothing I changed was drastic enough for you to imply I was being deceptive.

Have a good one.

That was one of the tamest comments I’ve seen on the internet. There weren’t even remarks about your mom.

None of those concerns are new in principle: AI is the current thing that makes people worry about corporate and government BS, but corporate and government BS isn’t new.

Then: The cat is out of the bag, you won’t be able to put it in again. If those things worry you the strategic move isn’t to hope that suddenly, out of pretty much nowhere, capitalism and authoritarianism will fall never to be seen again, but to a) try our best to get sensible regulations in place, the EU has done a good job IMO, and b) own the tech. As in: Develop and use tech and models that can be self-hosted, that enable people to have control over AI, instead of being beholden to what corporate or government actors deem we should be using. It’s FLOSS all over again.

Or, to be an edgelord to some of the artists out there: If you don’t want your creative process to end up being dependent on Adobe’s AI stuff then help train models that aren’t owned by big CGI. No tech knowledge necessary, this would be about providing a trained eye as well as data (i.e. pictures) that allow the model to understand what it did wrong, according to your eye.

I said:

I don’t think these people should be locked up or all AI usage banned. But there is definitely a middle ground between absolute prohibition and no restrictions at all.

I have used AI tools as a shooter/editor for years so I don’t need a lecture on this, and I did not say any of the concerns are new. Obviously, the implication is AI greatly enables all of these actions to a degree we’ve never seen before. Just like cell phones didn’t invent distracted driving but made it exponentially worse and necessitated more specific direction/intervention.

They mentioned one potential use that I thought has value and that I hadn’t considered. For video conferencing, this could transmit data without sending video and greatly reduce the amount of bandwidth needed by rendering people’s faces locally. I don’t think that outweighs the massive harms this technology will unleash. But at least there was some use that would be legit and beneficial.

I’m someone who has a moral compass and I don’t like that scammers will abuse this shit so I hate it. But there’s no keeping it locked away. It’s here to stay. I hate the future / now.

Also I would argue sending the actual video of what is happening in front of the camera is kind of the entire point of having a video call. I don’t see any utility in having a simulated face to face interaction where neither of you is even looking at an actual image of the other person.

Wouldn’t you then have to run the AI locally on a machine (which probably draws a lot of power and memory) or use it via cloud (which depends on bandwidth just like a video call)? I don’t really see where this technology could actually be useful. Sure, if it is only a minor computation just like if you take a picture/video with any modern smartphone. But computing an entire face and voice seems much more complicated than that and not really feasible for the usual home device.

Yeah, it’s not practical right now, but in 10 years? Who knows, we might finally have some built-in AI accelerator capable of running big neural networks on consumer CPUs by then (we do have AI accelerators in a large chunk of current CPUs, but they’re not up to the task yet). The system memory should also go up now that memory-hungry AI is inching closer to mainstream use.

Sure, Internet bandwidth will also increase, meaning this compression will be less important, but on the other hand, it’s not like we’ve stopped improving video codecs after H.264 because it was good enough - there are better codecs now even though we have the resources to handle bigger H.264 videos.

The technology doesn’t have to be useful right now - for example, neural networks capable of learning have been studied since the 1940s, even though there would be no way to run them for many decades, and it would take even longer to run them in a useful capacity. But now that we have the technology to do so, they enjoy rapid progress building on top of that original foundation.

A model that can only generate frontal to profile views of heads would be quite small, I can totally see that kind of thing running on current consumer GPUs, in real time. Near real time is already possible with SDXL-based models with some speedup tricks applied as long as you have a mid-range gaming GPU, and those models are significantly more general. It’s not like the model would need to generate spaghetti and sports cars alongside the head.

You can’t simply not develop a technology. Progress is going to move forward. If they don’t do it, somebody else is going to figure out how. The tools are out there. The math works. Better for researchers to do it now and scare us into finding solutions than for criminals to develop it first.

Other than the obvious malicious uses of this technology, it could be great for multimedia, great for creative control for cast, great for virtual meetings to always look “your best” (as determined by each individual, e.g. clean-cut pristine, and/or preferred gender, and/or favorite anime, etc.). There are also use cases to hear letters spoken by a lost loved one, or replace the Three Stooges with politicians. Tons of “safe” use cases that I am looking forward to.

I’m not convinced any of these uses are actually beneficial. They mostly range from creepy to pointless.

Entertainment might be pointless to some. I dream of having an on-demand Netflix that will generate whatever type of content I can imagine on demand, or better yet already know my preferences and all I have to do is tell it my mood and it will start playing something I would like.

A difference in goals, I guess. Having programs generated just to pander to my existing tastes sounds horrible to me. I want to be challenged and surprised and have my tastes tested and changed in unpredictable ways. I also want to watch stuff that’s written by humans and acted by humans, because there’s a sense of shared life there that there isn’t in an AI-generated video.

It’s also then just one step removed from refusing to accept any friends or romantic partners who don’t do exactly what you want at all times because life is supposed to be tailored to you.

I think this has an effect most people don’t think of: Media will just lose its value as a trusted source of information. We’ll just lose the ability to rely on broadcast media, as anything could be faked. Humanity is back to “word of mouth”, I guess.

What can possibly go wrong?

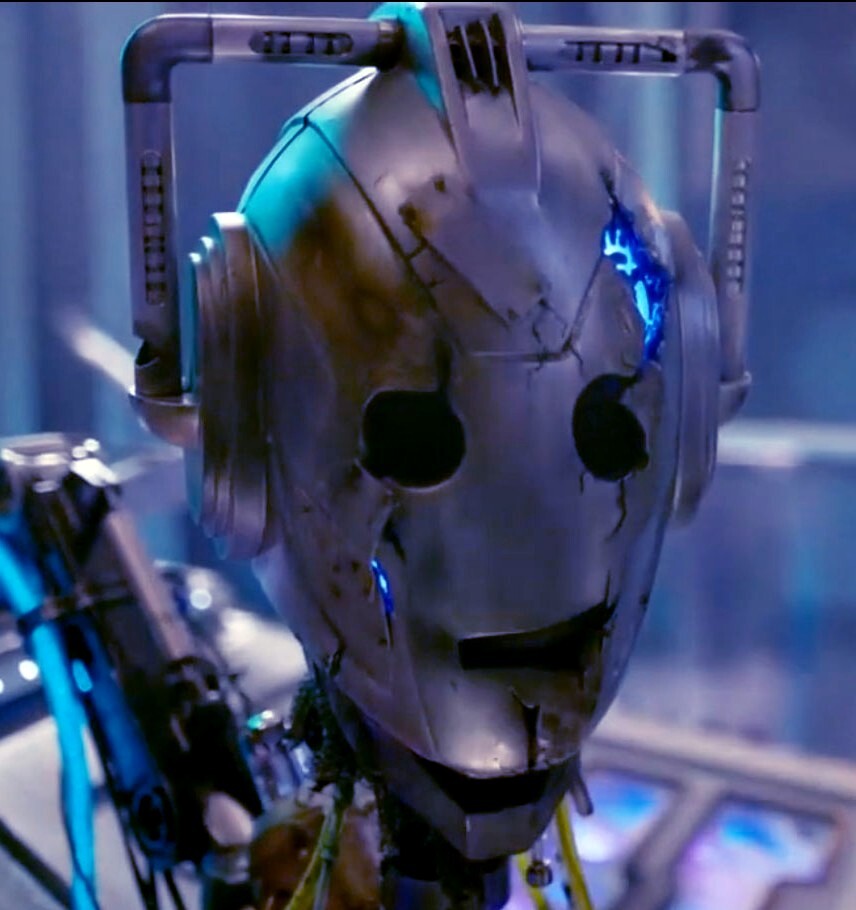

The eyes still have uncanny valley vibes, but that’s because I’m looking for it. If I wasn’t watching demo videos about generated video, I might not have noticed.

And that’s the problem. The average person isn’t looking for it, and will absolutely not see it. As long as it’s good enough, that’s all that matters. A plausible enough video of Joe Biden talking about rounding up Christians into internment camps that gets shared on Facebook, or something like that which panders to right-wing bigotry, is enough to get people going. Even real images and videos that are miscaptioned are enough, and even when a link is there that disproves the caption.

People seriously underestimate just how horrifying the possibilities are with this shit. And as high stakes as this election cycle is, and the state of politics in this country, the tendency for people to latch on to anything that affirms their preexisting ideals creates a fucking minefield.

Trained on YouTube clips

It could have been worse. Imagine it trained on TikTok clips.

Someone help me out please. Who was the 90s sci-fi author who predicted actors would go away and all movies would be made using CGI/AI? She had characters in the book watching movies starring Humphrey Bogart and John Wayne as detectives solving crimes (and so on). She also predicted “ractors”, people who act in front of a camera so a computer can use their motion and expressions to animate a character on screen in real time.

My feeble brain, I swear… In any case, thanks to her, I knew this day was coming. Gonna be a wild ride though.

According to Le Chat,

The author you’re thinking of is Neal Stephenson, and the book is “Snow Crash” published in 1992. In the book, he coined the term “ractors” for actors who perform in front of motion-capture cameras to create lifelike animations. He also predicted the use of CGI and AI in filmmaking to create movies with long-dead actors.

I haven’t read it and the Wikipedia article doesn’t seem to mention virtual actors, so it could be wrong. At least it didn’t hallucinate a fake book.