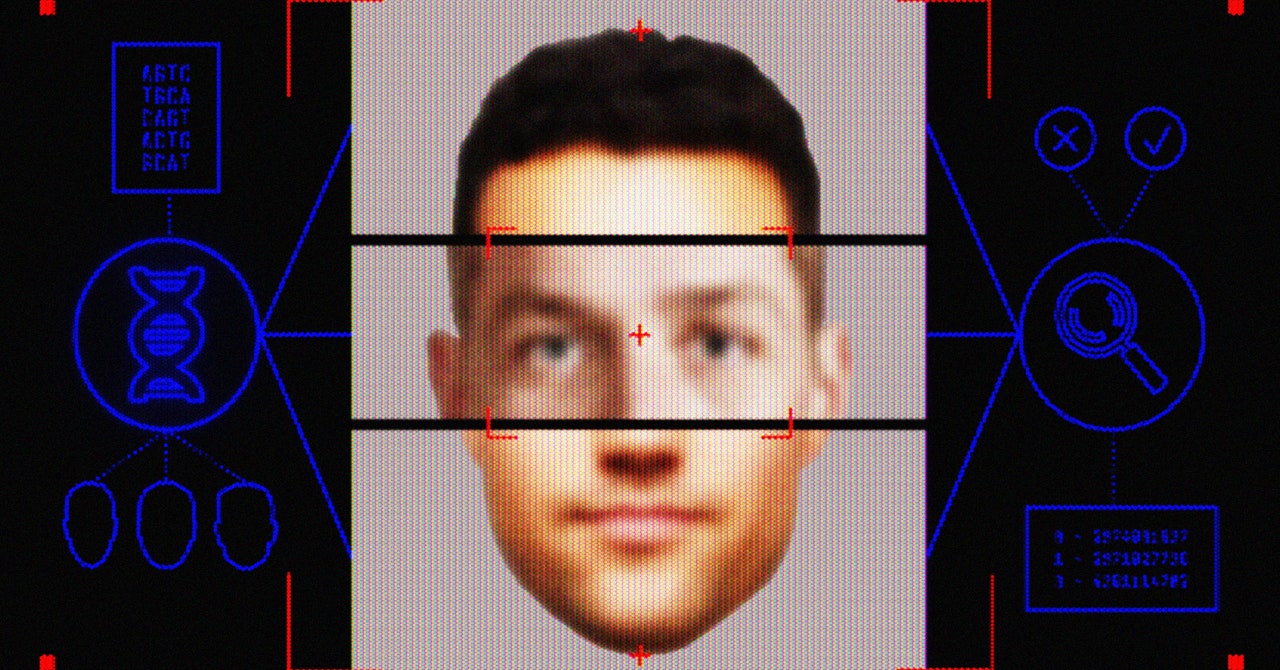

For facial recognition experts and privacy advocates, the East Bay detective’s request, while dystopian, was also entirely predictable. It emphasizes the ways that, without oversight, law enforcement is able to mix and match technologies in unintended ways, using untested algorithms to single out suspects based on unknowable criteria.

Cops only like technology when they can abuse it to avoid having to do real investigative police work.

They don’t care to understand the technology in any deep manner, and as we’ve seen with body cams, when they retain full control over the technology, it’s basically a farce to believe it could be used to control their behavior.

I mean, on top of that, a lot of “forensic science” isn’t science at all and is arguably a joke.

Cops like using the veneer of science and technology to act like they’re doing “serious jobs” but in reality they’re just a bunch of thugs trying to dominate and control.

In other words, this is just the beginning. Don’t expect them to stop doing stuff like this. Further, expect them to start producing “research” that “justifies” these “investigation” methods, and expect to see those methods added to the pile of bullshit that is “fOrEnSiC sCiEnCE.”

After the murder of Michael Brown, body cams were lauded by centrists as a way to prevent police from unlawfully killing people. And there’s never been a single police shoo- oh wait

TBH: Tech companies are not much different from how you described cops.

They don’t usually bother to properly learn the tech they are using, and they take every shortcut possible. You can see this in the current spate of AI startups. Sure, LLMs work pretty well. But most other applications of AI are more like: “LOL, no idea how to solve the problem. I hooked it up to this black box, which I don’t understand, and trained it to give me the results I want.”