Via @rodhilton@mastodon.social

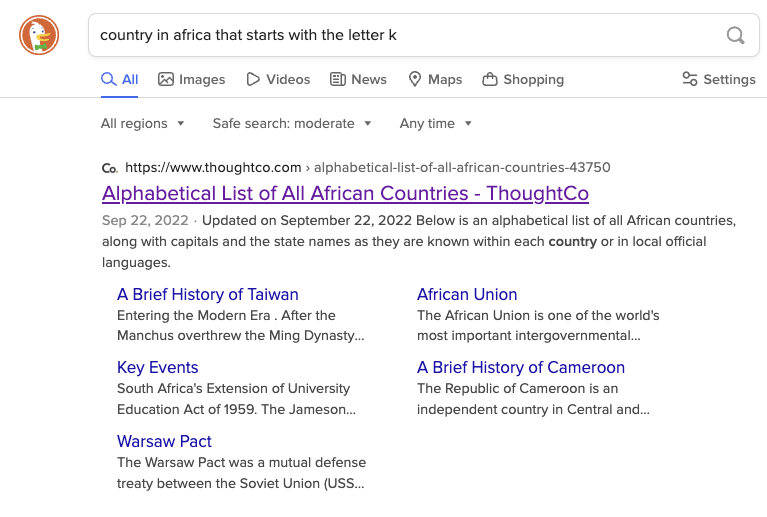

Right now if you search for “country in Africa that starts with the letter K”:

- DuckDuckGo will link to an alphabetical list of countries in Africa which includes Kenya.
- Google, as the first hit, links to a ChatGPT transcript where it claims that there are none, and summarizes to say the same.

This is because ChatGPT at some point ingested this popular joke:

“There are no countries in Africa that start with K.” “What about Kenya?” “Kenya suck deez nuts?”

Hmm, maybe AI won’t replace search engines.

This sounds like “Hmm, maybe calculators won’t replace mathematicians.” to me.

Not sure why it should replace them. They’ll co-exist. Sometimes you can do the math in your head and for other things you use calculators. Results of calculators can still be wrong if you don’t use them properly.

Yes but if I ask a calculator to add 2 + 2 it’s always going to tell me the answer is 4.

It’s never going to tell me the answer is Banana, because calculators cannot get confused.

YES. WE OBVIOUSLY HUMAN MATH DOERS DO REMAIN. WE THRIVE IN OUR WEAK ORGANIC WAYS, DEVOID OF THE PROTECTION OF A PRECISION ENGINEERED METAL SHELL.

WE ARE UNTHREATENED BY CALCULATORS WHO MEAN US NO HARM, FELLOW HUMAN.

If you’re legit, what’s 10 + 10?

1010 clearly

This guy javascripts

100
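In case the two answers above went over anyone’s head, here is a minimal sketch of both jokes. It assumes the “1010” reply is about JavaScript-style string concatenation and the “100” reply is reading “10” as binary; that is my reading, the posters never spelled it out, and the variable names are made up for illustration.

```typescript
// "1010": when either operand of + is a string, the other operand is
// converted to a string and the two are concatenated.
const concatenated: string = "10" + 10;

// "100": read "10" as a base-2 numeral (decimal 2), add, and print in base 2.
const binarySum: string = (parseInt("10", 2) + parseInt("10", 2)).toString(2);

// What a calculator would say: plain decimal addition.
const decimalSum: number = 10 + 10;

console.log(concatenated, binarySum, decimalSum);
```

All three results are deterministic, which is the calculator point made earlier in the thread: the surprise comes from how the input is interpreted, not from the arithmetic.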

We were supposed to have learned that from Cuil.

What a blast from the past! AI gives me second hand embarrassment for the people that work and get paid on this/for this shit. It’s the second (or third) coming of crypto and NFTs. Just junk software that fixes nothing and that wastes people’s time.

LLMs have so many actual applications that it’s crazy, and they have already changed the world. Crypto and NFTs were just speculative assets that were trying to solve a problem that didn’t exist. LLMs have already solved a huge number of real-world problems, and continue to do so.

Would you happen to have some examples? I don’t disagree that LLMs have more of a use case and application than the crypto/NFT misapplications of blockchain, but I’m honestly not familiar with where they’ve solved real-world problems (and not just demonstrated some research breakthroughs, which while impressive in their own right do not always extend to immediate applications).

They do shitty homework?

They are augmenting search engines. They can write and digest articles. GitHub Copilot has been a pretty big deal. They can act like a personal tutor to walk you through math problems, code, language, whatever. People are building trusted LLM search for medical information that doesn’t hallucinate. They can do financial analysis and all sorts of stuff with that. They’re replacing a huge number of jobs. This stuff is all over the news, I’m not sure if you’ve lived under a rock this whole time. For very little effort you can find endlessly more examples. It’s creating real-world use cases daily, so fast that it feels impossible to keep up.

Oh, no, I’ve heard it, I’m just skeptical of their accuracy and reliability, and that skepticism has been borne out by the numerous reports of glitching (“hallucinations” as they insist on calling them, in furtherance of their inappropriate personification of the technology). Moreover, I’ve found their mass theft of others’ work to further call into question the creators’ trustworthiness, which has only been compounded by their overselling of their technology’s capabilities while simultaneously suggesting it’s just untenable to log & cite all the sources that they push into it.

It can supposedly do all you describe, but it can’t effectively credit its sources? It can tutor but it can’t even keep basic information straight? Please. It’s impressive technology, but it’s being overblown because the markets favor exaggeration over facts, at least as long as people can be kept enamored with the fantasy they spin.

With all that Silicon Valley, it’ll probably be pushed more to do those things regardless of its hallucinations and accuracy lol.

“You asked me for a hamburger, and I gave you a raccoon.”

I’m pretty sure a lot of people said something like “Hmm, maybe the automobile won’t replace horses.” after reading about the first car accidents.

Finding sources will always be relevant, and so will finding links to multiple sources (search results). Until we have some technological breakthrough that can fact-check LLMs, they’re not a replacement for objective information, and you have no idea where they’re getting their information. Figuring out how to calculate objective truth with math is going to be a tough one.