DEF CON Infosec super-band the Cult of the Dead Cow has released Veilid (pronounced vay-lid), an open source project applications can use to connect up clients and transfer information in a peer-to-peer decentralized manner.

The idea being here that apps – mobile, desktop, web, and headless – can find and talk to each other across the internet privately and securely without having to go through centralized and often corporate-owned systems. Veilid provides code for app developers to drop into their software so that their clients can join and communicate in a peer-to-peer community.

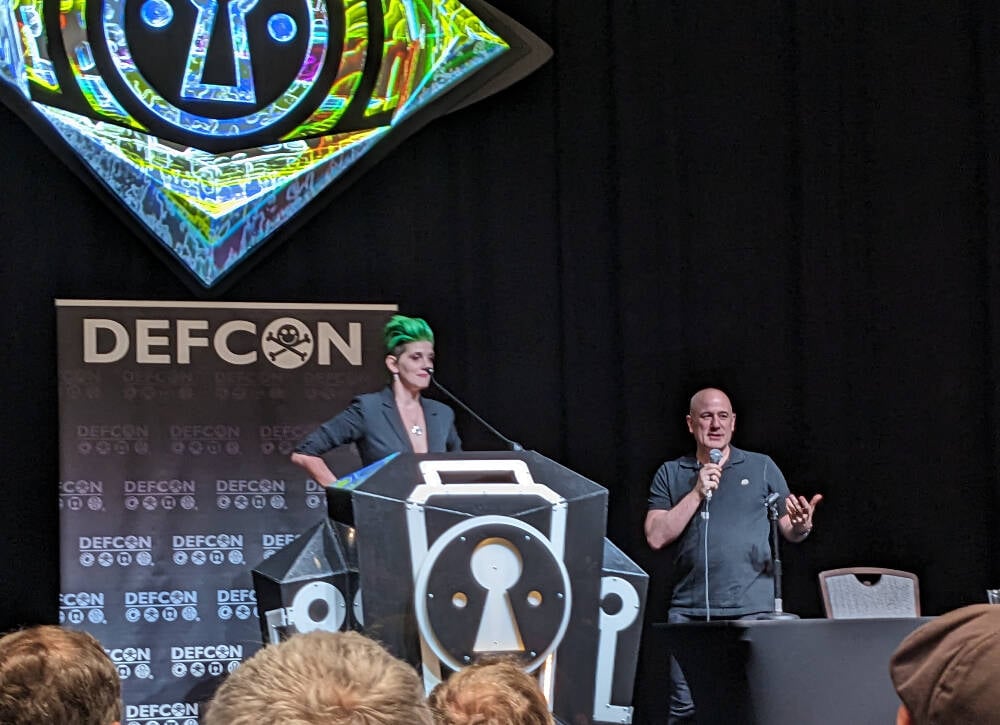

In a DEF CON presentation today, Katelyn “medus4” Bowden and Christien “DilDog” Rioux ran through the technical details of the project, which has apparently taken three years to develop.

The system, written primarily in Rust with some Dart and Python, takes aspects of the Tor anonymizing service and the peer-to-peer InterPlanetary File System (IPFS). If an app on one device connects to an app on another via Veilid, it shouldn’t be possible for either client to know the other’s IP address or location from that connectivity, which is good for privacy, for instance. The app makers can’t get that info, either.

Veilid’s design is documented here, and its source code is here, available under the Mozilla Public License Version 2.0.

“IPFS was not designed with privacy in mind,” Rioux told the DEF CON crowd. “Tor was, but it wasn’t built with performance in mind. And when the NSA runs 100 [Tor] exit nodes, it can fail.”

Unlike Tor, Veilid doesn’t run exit nodes. Each node in the Veilid network is equal, and if the NSA wanted to snoop on Veilid users like it does on Tor users, the Feds would have to monitor the entire network, which hopefully won’t be feasible, even for the No Such Agency. Rioux described it as “like Tor and IPFS had sex and produced this thing.”

“The possibilities here are endless,” added Bowden. “All apps are equal, we’re only as strong as the weakest node and every node is equal. We hope everyone will build on it.”

Each copy of an app using the core Veilid library acts as a network node, it can communicate with other nodes, and uses a 256-bit public key as an ID number. There are no special nodes, and there’s no single point of failure. The project supports Linux, macOS, Windows, Android, iOS, and web apps.

Veilid can talk over UDP and TCP, and connections are authenticated, timestamped, strongly end-to-end encrypted, and digitally signed to prevent eavesdropping, tampering, and impersonation. The cryptography involved has been dubbed VLD0, and uses established algorithms since the project didn’t want to risk introducing weaknesses from “rolling its own,” Rioux said.

This means XChaCha20-Poly1305 for encryption, Elliptic curve25519 for public-private-key authentication and signing, x25519 for DH key exchange, BLAKE3 for cryptographic hashing, and Argon2 for password hash generation. These could be switched out for stronger mechanisms if necessary in future.

Files written to local storage by Veilid are fully encrypted, and encrypted table store APIs are available for developers. Keys for encrypting device data can be password protected.

“The system means there’s no IP address, no tracking, no data collection, and no tracking – that’s the biggest way that people are monetizing your internet use,” Bowden said.

“Billionaires are trying to monetize those connections, and a lot of people are falling for that. We have to make sure this is available,” Bowden continued. The hope is that applications will include Veilid and use it to communicate, so that users can benefit from the network without knowing all the above technical stuff: it should just work for them.

To demonstrate the capabilities of the system, the team built a Veilid-based secure instant-messaging app along the lines of Signal called VeilidChat, using the Flutter framework. Many more apps are needed.

If it takes off in a big way, Veilid could put a big hole in the surveillance capitalism economy. It’s been tried before with mixed or poor results, though the Cult has a reputation for getting stuff done right. ®

I believe financial consequences can be very useful to make it expensive to spam or be abusive.

For example, for a user to access an app:

The user Reputation and distribution of fines:

That’s a very interesting approach, and may work for very specific applications. It seems unlikely most platforms or apps would adopt such an approach since it would be a very high burden to overcome for users to sign up, which is what let’s an app or platform grow. People are rather attached to money, but there’s also the other side of this. A user may not care about the fine and continue to break the rules as “the cost of doing business” because they’re wealthy.

It’s definitely going to be a technology that drives anonymity, and everything that comes with it, for better or worse. I can see a lot of good this can do, but also a lot of crime it can facilitate.

I agree. There is a potential barrier to entry, and growth. I argue:

The first point is the most important IMHO, privacy users accept the learning curve and inconvenience because they believe privacy is more important and because of this, I believe the burden is not as high as we think, that a ‘free to play’ alternative means of accessing privacy respecting apps (by this idea or something else) is as as essential to supporting and protecting privacy as E2EE vs server side encryption.